Data Bugs & How to Fix Them

- Guy Smoilovsky

- 6 min read

- 5 years ago

Co-Founder & CTO @ DAGsHub

As data scientists know from painful experience, bugs in the code usually aren't the big difficult problem. Data itself contains bugs, in the terms of the classic definition of bugs - unexpected behaviors, usually resulting from mistaken assumptions.

In this post, I'd like to share my personal story of a data bug, which is very indicative of the types of bugs you will come across in data science work.

At DAGsHub, we're creating tools to make these bugs easier to fix. I'll show how I applied these tools in this concrete case. Fixing data bugs is a challenging process and the tools aren't as amazing as we believe they could be. We're on it though! 😉

Background

The first tutorial we made for DAGsHub was a “standard” MNIST tutorial.

It was nice and simple - maybe too simple! A lot of users remarked that they're sick and tired of MNIST tutorials, that they don't represent reality at all - the data is already clean, balanced, labeled, and uniform. It's just straight-up images. Seeing an MNIST tutorial immediately puts them off since they feel you can make anything look good with MNIST, whether it's actually good or not.

So, we decided to create a new tutorial, based on real-world data. We decided to use the StackExchange API to generate a CSV file consisting of data from a lot of questions, specifically from CrossValidated which deals with questions about statistics. The questions include both text and tabular metadata. The label to predict was "is this tagged as a machine learning question or not?", which you can easily imagine as either a recommender system for the user writing the question or an engineered feature used in some backend BI or automation. The labels were also very unbalanced, with only 10% positive labels.

In short, this new tutorial represented reality much more faithfully than MNIST.

The problem

When I generated the original data, I didn't think about sorting by date - I just mistakenly assumed that I'll get back all the questions from the site's history or the latest questions. Oops. I think we've all been there at some point.

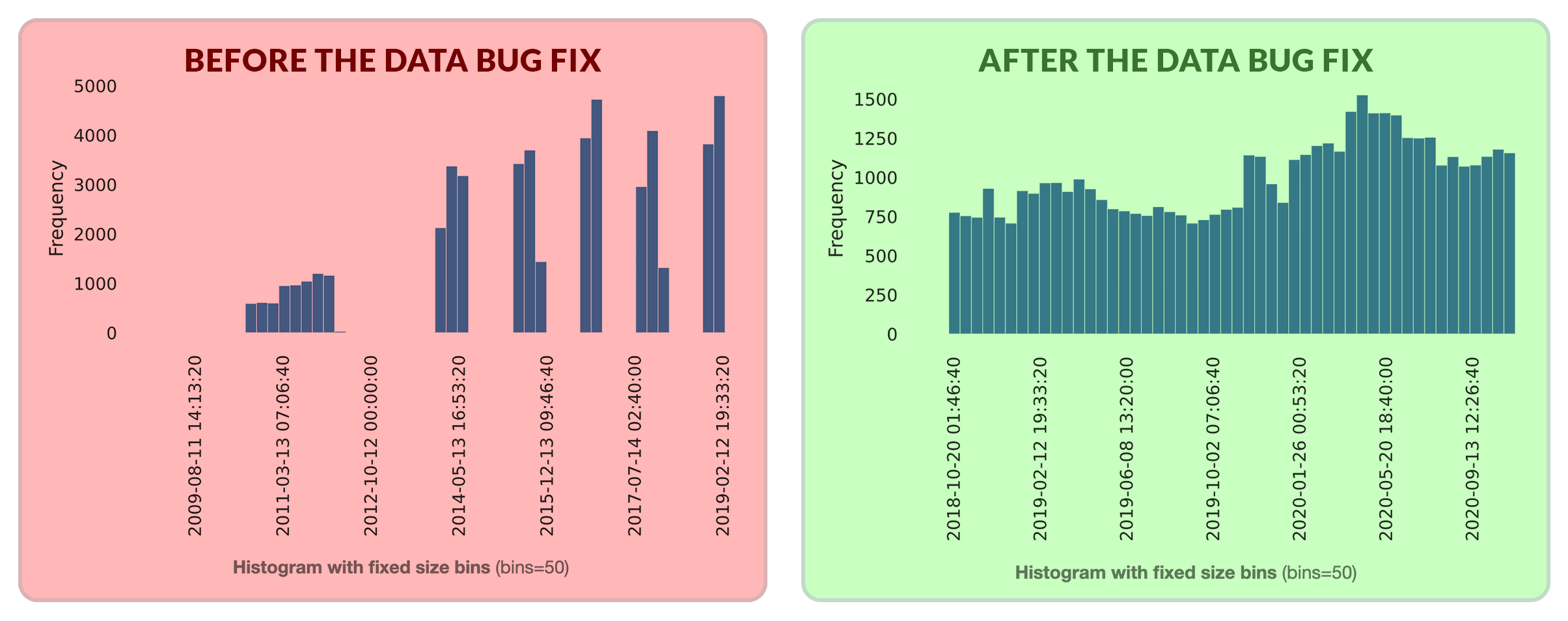

What I ended up getting is 50,000 samples, the upper limit of what the API is willing to return. Worse yet, the samples were not well distributed across time! If it had been a uniformly random selection, that would have been better. But I wasn't even that lucky. I don't know what weird sampling resulted in the time distribution that I actually got:

The bottom line - although I successfully built a decent model on top of the data, the data I used was broken. I had an entirely data related bug, and no change in my code was going to fix it. I needed to replace the data completely. In other cases, maybe I would have discovered that there is a subset of the data which just needs to be thrown out manually. These kinds of stories are very common in real life.

What can be done in this scenario on Github?

- Case 1: The data is stored in some external storage, like S3, and a URL to download it is saved as part of the code on Github. In such a case I could go to the bucket and replace the data stored there. However, I would lose the old data, and maybe even worse, I would lose the relationship between the versions of the data and my existing code! Later on, figuring out which versions of my code, experiments, and resulting models originated from the broken data becomes exceedingly difficult. I'm likely to forget, and even if not, what happens when I'm on vacation or leave the company? Whoever tries to pick up my work is set up for failure.

- Case 2: What if I create a new version of the data on S3, name it

data_v2.csv, and change the URL in my code? Welcome to Versioning Hell:

- Case 3: We use Git-LFS. Git-LFS is an extension to Git that allows you to efficiently version large files. It's not completely a part of Git - things like diffs and merges aren't supported, since it's too expensive to compute them on large data. What's more, you need a specialized server that can handle LFS. Luckily, GitHub does that. Unluckily, there are still problems - the amount of storage you get for your LFS is limited, and it's hardly a full-fledged data storage solution. I would much rather store these large files on some proper cloud storage, and find a convenient way to link them to my Git repo. What's more - GitHub provides very limited information on what has changed when you try to compare versions of these large files. A line-by-line diff is not practical, but I feel like there are more useful things to say than this:

Even if all the above technical issues are solved, there's one human aspect that's completely ignored - what is the impact of changing the data? OK, the file changed, but how does this affect my metrics? Is the data distributed differently now? Do I have visualizations I want to see before-and-after? In vanilla Git and Github, I can only meaningfully compare text and a few images.

What can you do on DAGsHub?

We rely on DVC to connect your data files to your Git commits - similar to Git-LFS, but much more flexible, allowing you to store your data on your own cloud storage, and have many other cool data-science specific features.

DAGsHub lets you see notebooks and intuitive notebook diffs, which help you understand the underlying data, and create a custom visual comparison of data versions. You can also view the contents of some data formats (more coming soon) as an integrated part of the UI. Comparing large datasets is also made possible by our Directory diffing capabilities. Finally, specifically for issues like this, we created Data Science Pull Requests, which lets you perform the same review process for data as you would with code.

With all these tools in hand, I can now just replace my data file with the new, corrected version, use DVC and Git to commit the new version, and voila! The data is now fixed...

...Well, almost. I still need to push the data to my remote, otherwise, the project is pretty useless to anyone not in possession of my laptop.

Setting up cloud storage is hard

OK, so now we can get a version-controlled pull request for data, with meaningful data science information to guide decisions. Our data is stored on full-fledged storage with world-scale capacity. Excellent!

But let's face it - setting up cloud storage is a pain in the neck. Credit cards? IAM? Bucket retention policies? Service accounts?

Blech 🤢 !

That's all way too hard. If we want open-source data science to flourish, we have to make it much more accessible. Well-intentioned people will want to help find and fix bugs, but we shouldn't force them to jump through hoops. Friction is a killer of creativity and productivity.

It should be as easy as git push, and access control should be handled automatically.

We're working hard at DAGsHub to make that a reality (stay tuned for more).

Summary

In real life, data is messy, and as much as you need to iterate on the code or the models, you need to iterate on your data and annotations. Reverting to old-school versioning hell is not a solution. Data should be treated as source code with its versions managed, and fixes to data should undergo the same review process that everything else does. More than that - to enable real open source, I want anyone to be able to contribute fixes to my data.